Get ready to be amazed because AI tool development has come a long way since the beginning of the year. In just a few short months, Generative AI has become incredibly practical and is revolutionizing production and post-production processes in the video, imaging, and creative content industries. It’s truly mind-blowing!

But before we dive into the latest technological updates, let’s address the big question on everyone’s mind: “Is AI going to take my job?” It’s a valid concern, and the answer is not so clear-cut. We can’t predict the future of AI development because it’s happening at such a rapid pace. So for now, let’s take a step back and examine where we currently stand and what AI really is.

There’s been a lot of confusion about what AI actually is and why it’s called “Artificial Intelligence” if it requires human interaction. Stephen Ford on Quora summed it up perfectly with a touch of speculation and sci-fi appeal. But the truth is, we don’t know the full extent of what we’re developing right now. You can read his entire response here.

In reality, we’ve been unknowingly “feeding the machine” for decades. Ever since we started using electronic communication, our clicks, words, images, and opinions have been collected and used for data harvesting and marketing purposes. It’s been happening for a while, but with the internet and IoT, it’s happening at lightning speed. And now, developers are taking all this data and applying it to machine learning models, resulting in faster learning and more accurate results.

But it’s crucial to understand how all of this works to truly grasp the “whys” behind AI. Pinar Seyhan Demirdag of Seyhan Lee provides an excellent explanation of Generative AI in her LinkedIn course. You can check it out here.

NVIDIA’s website also sheds light on how Generative AI models function. They use neural networks to identify patterns and structures within existing data, allowing them to generate new and original content. This breakthrough has enabled organizations to leverage unlabeled data and create foundation models that can perform multiple tasks.

For example, GPT-3 and Stable Diffusion are foundation models that have transformed their respective domains: language and images. ChatGPT, which draws from GPT-3, can generate essays based on short text requests, while Stable Diffusion can generate photorealistic images from text inputs.

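When it comes to generative AI models, there are three key requirements for success: quality, diversity, and speed. Outputs need to be high quality, the model should capture minority modes (the less common patterns in its training data) rather than only the most typical ones, and generation must be fast enough for interactive applications.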

So, let’s be clear – generative AI is not simply a matter of copying and pasting content from the internet. It’s a complex process that will continue to evolve.

Now, let’s talk about diffusion models like Midjourney, DALL-E, and Stable Diffusion. These models learn through a two-step process during training. In the forward diffusion process, random noise is gradually added to the training data; in the reverse process, the model learns to remove that noise step by step to reconstruct the original samples. Once trained, the model can start from pure random noise and run the reverse process to generate novel data. Stable Diffusion, for example, does this on vectors in a compressed latent space rather than directly on pixels.
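To make the forward process concrete, here is a minimal Python sketch of the noising step used in DDPM-style diffusion models. It assumes a linear noise schedule (1,000 steps, with the per-step noise variance rising from 0.0001 to 0.02, a common textbook choice and not necessarily what Midjourney, DALL-E, or Stable Diffusion actually use) and treats a short list of numbers as a stand-in for an image or latent vector. The function names are illustrative. Only the forward direction is shown; the reverse process needs a trained neural network that predicts the added noise.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Assumed schedule: per-step noise variances beta_t rising linearly."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for s <= t.
    It measures how much of the original signal survives after t steps."""
    alpha_bars = []
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def forward_diffuse(x0, t, alpha_bars, rng):
    """Jump straight from clean data x0 to noisy x_t:
    x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * noise."""
    a = alpha_bars[t]
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * n for x, n in zip(x0, noise)]
    return xt, noise

if __name__ == "__main__":
    rng = random.Random(0)
    alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
    x0 = [0.5, -0.2, 0.9, 0.1]  # toy "data sample", e.g. four pixel values
    for t in (0, 250, 999):
        xt, _ = forward_diffuse(x0, t, alpha_bars, rng)
        # Print the step, how much signal remains, and the noisy sample.
        print(t, round(math.sqrt(alpha_bars[t]), 4), [round(v, 3) for v in xt])
```

Printing the surviving signal factor for a few steps shows the original data fading toward zero while the noise takes over. By the last step the sample is essentially pure noise, which is exactly where generation starts.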

Lastly, let’s address generative text models like ChatGPT. Zapier.com’s blog provides a simple explanation: ChatGPT understands your prompt and generates strings of words that it predicts will best answer your question based on its training data. During training, the AI is given ground rules and exposed to various situations or loads of data to develop its own algorithms.

So, as you can see, AI is a fascinating and ever-evolving field. We’re just scratching the surface of its potential, and the possibilities are endless. Stay tuned for more updates on the incredible world of AI tools!

GPT-3, the powerful language model developed by OpenAI, was trained on a dataset of roughly 500 billion “tokens.” Tokens are the units of text the model reads and predicts: some represent single words, while longer or more complex words are broken down into multiple tokens. On average, a token is about four characters of English text. Although OpenAI has disclosed little about the inner workings of GPT-4, it is reasonable to assume that it was trained on a similar or larger dataset.
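The four-characters-per-token figure is handy for back-of-the-envelope estimates. This Python sketch simply applies that rule of thumb; it is an approximation, and the helper names are hypothetical. For exact counts you would use a real tokenizer such as OpenAI’s `tiktoken` library.

```python
import math

def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token count from the ~4 characters-per-token rule of thumb.
    Approximation only: real tokenizers split text by learned rules."""
    if not text:
        return 0
    return math.ceil(len(text) / chars_per_token)

def estimate_characters(num_tokens: float, chars_per_token: float = 4.0) -> float:
    """Inverse estimate: roughly how many characters num_tokens represent."""
    return num_tokens * chars_per_token

sentence = "Generative AI is changing post-production."
print(len(sentence), estimate_tokens(sentence))

# 500 billion tokens at ~4 characters each is on the order of 2 trillion characters.
print(f"{estimate_characters(500e9):.2e}")
```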

The training data for GPT-3 consisted of a massive corpus of human-written content, including books, articles, and various documents covering a wide range of topics, styles, and genres. A significant amount of content was also scraped from the open internet, providing an unbelievable amount of information. In essence, GPT-3 had access to an enormous cross-section of written human knowledge.

This vast dataset was used to train a deep learning neural network, an architecture loosely inspired by the human brain. Through this training, the model behind ChatGPT, a variant of GPT-3, learned patterns and relationships in the text data. By predicting what text should come next in a sentence, ChatGPT is able to generate human-like responses.
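Next-word prediction can be illustrated with a toy model that is far simpler than ChatGPT. The Python sketch below is a bigram counter, not a neural network: it records which word follows which in a tiny training text and predicts the most frequent follower. The corpus and function names are made up for illustration; real models learn far richer statistics over tokens and long contexts.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus: str) -> dict:
    """Count, for every word, which words follow it in the training text."""
    words = corpus.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def predict_next(model: dict, word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigrams(corpus)
# "cat" follows "the" twice, more often than "mat" or "fish".
print(predict_next(model, "the"))
```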

ChatGPT still works by predicting one token at a time, but by repeatedly feeding its own output back in, it can produce whole sentences, paragraphs, and even stanzas of verse. This is different from the predictive text on your phone, which only suggests the next word: ChatGPT aims to create fully coherent responses to any prompt. It is a powerful tool that can be harnessed for various purposes.
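Feeding each prediction back in as the next input is what “autoregressive” generation means. This Python sketch reuses the same hypothetical bigram idea and generates greedily, always picking the most frequent next word; it is a toy illustration of the loop, not how ChatGPT is implemented.

```python
from collections import Counter, defaultdict

def train_bigrams(words):
    """Count which word follows each word in the training text."""
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def generate(model, start, max_words=10):
    """Greedy autoregressive generation: each predicted word is appended
    to the output and becomes the input for the next prediction."""
    output = [start]
    for _ in range(max_words - 1):
        counts = model.get(output[-1])
        if not counts:
            break  # no known continuation, so stop early
        output.append(counts.most_common(1)[0][0])
    return " ".join(output)

words = "roses are red violets are blue sugar is sweet".split()
model = train_bigrams(words)
print(generate(model, "roses", max_words=6))
```

With only one word of memory, the toy model loops and prints `roses are red violets are red`, because “are” always leads back to “red.” Models like ChatGPT condition each prediction on the whole preceding context, which is what lets them stay coherent across paragraphs.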

However, it is important to note that the results you get from ChatGPT or any other generative text model depend on how you use it. Blindly asking for help or information without any input or “training” on the topic may yield unreliable or incorrect results. Prompt engineering, as explained in the provided YouTube video, is crucial for obtaining accurate and useful responses.
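One common prompt-engineering habit is to give the model a role, some context, a clear task, and an output format instead of a bare question. The Python helper below only assembles such a prompt as a string; the section labels and example values are hypothetical, and it does not call any AI service.

```python
def build_prompt(role: str, context: str, task: str, output_format: str) -> str:
    """Assemble a structured prompt: who the model should act as, what it
    should know, what to do, and how to shape the answer.
    The section labels are illustrative, not a required syntax."""
    sections = [
        f"You are {role}.",
        f"Context:\n{context.strip()}",
        f"Task:\n{task.strip()}",
        f"Answer format:\n{output_format.strip()}",
    ]
    return "\n\n".join(sections)

prompt = build_prompt(
    role="an experienced video editor",
    context="The client needs a 30-second social cut from a 3-minute product interview.",
    task="Suggest an edit structure with approximate timestamps.",
    output_format="A numbered list, one line per shot.",
)
print(prompt)
```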

Now, let’s address the question of whether AI will take your job. The answer depends on the nature of your job. If you are solely focused on one task, such as creating graphics or editing content, there is a possibility that AI will eventually replace those roles. Writing, editing, analysis, basic programming, design, content creation, conceptualization, and even voice-over work are already at risk. It is essential to adapt, diversify your skills, and embrace the changes brought by AI to avoid becoming redundant.

From my personal experience, embracing new tools and technologies has brought me creative vigor and excitement throughout my career. I have reinvented myself multiple times over the past 40+ years, adapting to technological advancements. I am constantly looking ahead to see how the future will shape my work. So, it is crucial to constantly diversify your capabilities and stay ahead of the wave. Don’t wait for change to happen; make proactive changes in your career and income stream.

Feel free to share your thoughts on AI and the industry in the comments section below. Let’s continue the conversation!

The image displayed above is almost complete, with only a few minor adjustments needed to make it suitable for various marketing materials. The scientist’s gloves, arm, lab coat sleeve, and the vertical DNA strand are all accurately extended in the image.

Another example involves a photo taken by our team a few years ago, featuring a scientist leaning against the railing at one of our HQ buildings. Using the Object Select Tool, I easily masked her out.

By inverting the selection and entering “on a balcony in a modern office building” in the Generative Fill panel, I got an impressive image. It even includes reflections of the lab coat in the chrome and glass, matching the lighting direction and shadows.

Working with AI Generated Images

To fill an iPhone screen, I used a Midjourney image and outpainted the edges. (You can learn more about how the image was initially generated using ChatGPT in the Midjourney section below.)

In Photoshop (Beta), I opened the image and increased the Canvas Size to match the pixel dimensions of my iPhone. Then, I selected the “blank space” around the image and let Photoshop perform an unprompted Generative Fill, resulting in the images below:

Using Photoshop (Beta) Generative Fill to zoom out to the dimensions of the iPhone screen:

I was truly amazed that the generated images maintained the style of the original AI art and even enhanced it with “fantasy” elements as they doubled in size.
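If you want to know in advance how much of the frame Generative Fill will have to invent, the arithmetic is simple. This Python sketch assumes the image stays centered on the enlarged canvas (Photoshop’s default Canvas Size anchor) and uses hypothetical dimensions: a 1024 × 1024 Midjourney image on a 1179 × 2556 portrait canvas. Substitute your own image size and your phone’s actual resolution; the function names are illustrative.

```python
def canvas_padding(img_w, img_h, canvas_w, canvas_h):
    """Empty pixels on each side when an image is centered on a larger canvas.
    Returns (left, right, top, bottom); odd leftovers go to the right/bottom."""
    if canvas_w < img_w or canvas_h < img_h:
        raise ValueError("canvas must be at least as large as the image")
    extra_w, extra_h = canvas_w - img_w, canvas_h - img_h
    left, top = extra_w // 2, extra_h // 2
    return left, extra_w - left, top, extra_h - top

def generated_fraction(img_w, img_h, canvas_w, canvas_h):
    """Share of the final canvas area that Generative Fill has to create."""
    return 1 - (img_w * img_h) / (canvas_w * canvas_h)

# Hypothetical sizes: square Midjourney image, portrait phone-sized canvas.
print(canvas_padding(1024, 1024, 1179, 2556))
print(round(generated_fraction(1024, 1024, 1179, 2556), 2))
```

With those example numbers, roughly two thirds of the final canvas is generated content, so the fill is doing far more than patching thin edges.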

For a helpful tutorial on using both the Remove Tool and Generative Fill to modify your images in Photoshop (Beta), check out this video from my colleague, Colin Smith from PhotoshopCAFE:

Midjourney 5.2 (with Zoom Out fill)

Midjourney has undergone significant improvements in its latest build, v5.2, which I want to highlight here.

With v5.2, the image results are much more photorealistic, although they may lack the “fantasy art” and super creative elements we were accustomed to in previous versions. However, let’s take a moment to appreciate the improved quality.

Here’s a comparison from June 2022 in Midjourney, where the faces, hands, and overall rendering quality were much lower, to v4 in February 2023, and finally to the current state in June 2023. All these images were generated straight from Midjourney (Discord).

My prompt for all three images remained the same: “Uma Thurman making a sandwich Tarantino style”. (Don’t ask me why – it was just a random prompt that I thought would be funny at the time. I can’t remember if I was sober or not.) 😛


When I wrote my first article in this series, AI Tools Part 1: Why We Need Them, I used a prompt generated by ChatGPT. The prompt was about a spaceship facing a malfunction while on a mission to save humanity. The crew had to work together to overcome obstacles and ensure their survival.

Using the same prompt in Midjourney v5.2 gave me a completely different result. The image generated was based on another prompt I had used months ago. I decided to try out the new Zoom-out feature in v5.2, which extends the frame beyond an existing image and fills the new area with generated content. I used this feature to create variations of the original image, zooming out further each time.

Here’s a tutorial on how to use the Zoom-out feature in Midjourney v5.2:

It’s fascinating to see where Midjourney takes an image when you use the Zoom-out feature. Each zoom gives you multiple options to explore and refine further.
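Because each zoom-out keeps the output at roughly the same resolution, the original image shrinks quickly as you keep zooming. This Python sketch shows the arithmetic, assuming the 2x Zoom Out option and a fixed output size; the function name is hypothetical.

```python
def original_share_after_zooms(zoom_factor: float, num_zooms: int):
    """After repeated zoom-outs by zoom_factor, return the original image's
    share of the final frame's width and of its area, assuming the output
    resolution stays fixed from one zoom to the next."""
    linear = 1 / (zoom_factor ** num_zooms)
    return linear, linear ** 2

for n in range(1, 4):
    width_share, area_share = original_share_after_zooms(2.0, n)
    print(f"{n} zoom(s): original spans {width_share:.1%} of the width, "
          f"{area_share:.2%} of the area")
```

After three 2x zooms, the starting image covers only about 1.6% of the frame, which is why each new round feels like a fresh composition.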

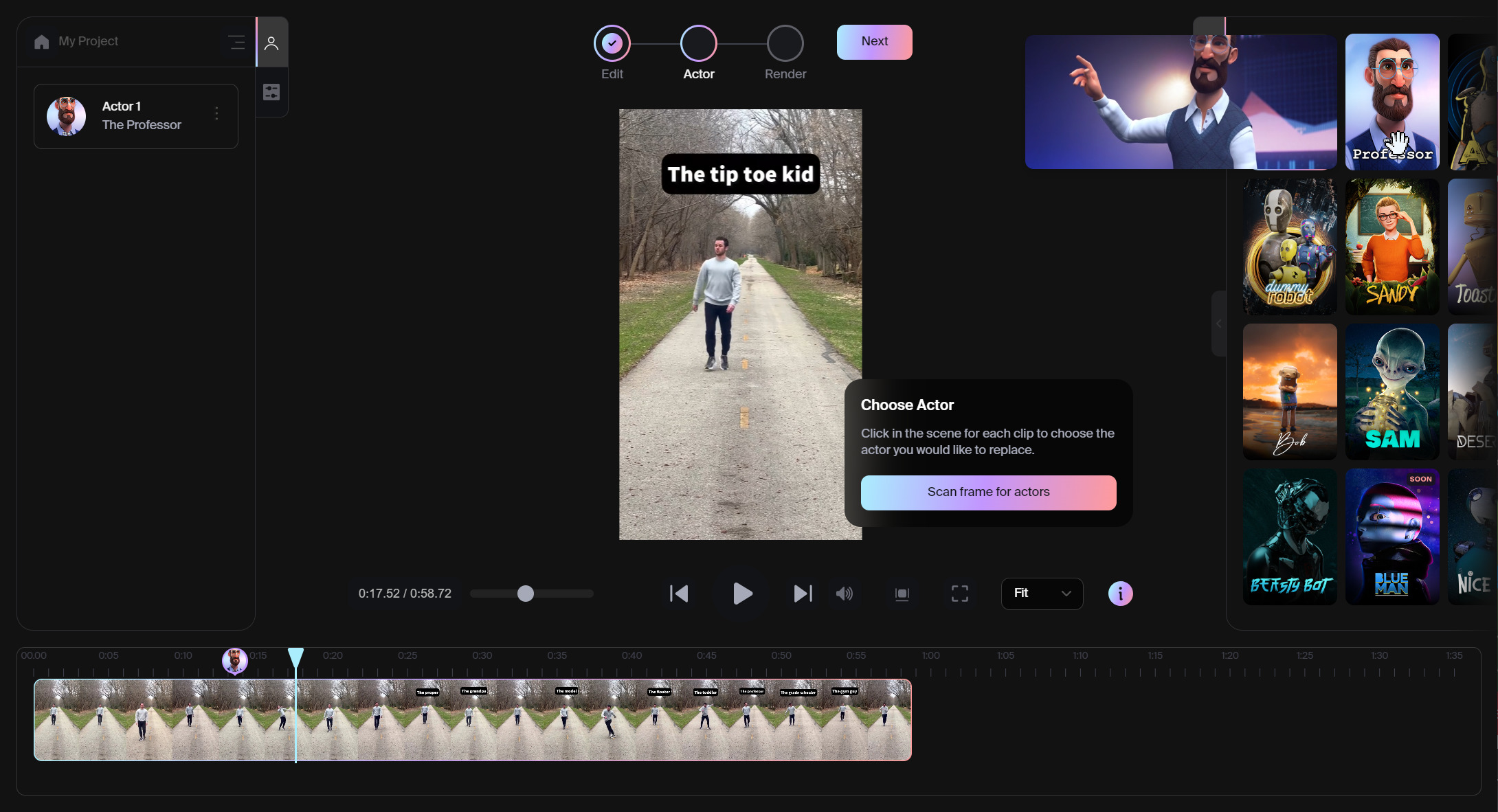

Another exciting tool is Wonder Studio by Wonder Dynamics. It’s an AI tool that automatically animates, lights, and composites CG characters into live-action scenes. You can choose from pre-made characters or add your own rigged 3D characters. Wonder Studio simplifies the animation process and eliminates the need for motion tracking markers and roto work.

Wonder Studio can generate various outputs for your production pipeline, such as a Clean Plate, Alpha Mask, Camera Track, MoCap, and even a Blender 3D file. It’s a versatile tool that can enhance your workflow and save time.

Overall, these generative AI tools offer exciting possibilities for creativity and innovation in various industries.

I was blown away by this viral video from @DanielLaBelle on YouTube, showcasing Various Ways People Walk. I decided to put Wonder Studio to the test and see how closely it could replicate his motion. Take a look at the side-by-side results and judge for yourself. It’s incredible to think that this video was rendered entirely in Wonder Studio on a web browser, taking only 30-40 minutes to complete.

While there are some artifacts and blurriness in certain scenes where the original actor was removed or not fully captured, it’s still an impressive feat. Check out this action scene shared by beta tester Solomon Jagwe on YouTube, where the AI-generated actor momentarily “pops on screen.”

ElevenLabs AI

Exciting updates from ElevenLabs – the AI Speech Classifier and the Voice Library.

AI Speech Classifier: A Step Towards Transparency (from their website)

Introducing our groundbreaking authentication tool – the AI Speech Classifier. This unique verification mechanism allows you to upload any audio sample and determine if it contains ElevenLabs AI-generated audio.

The AI Speech Classifier is a significant advancement in our mission to track AI-generated media effectively. With this launch, we aim to strengthen our commitment to transparency in the generative media space. Use our AI Speech Classifier to detect if an audio clip was created using ElevenLabs. Please note that only the first minute of audio files longer than 1 minute will be analyzed.

A Proactive Stand against Malicious Use of AI

As creators of AI technologies, we take it upon ourselves to promote education, ensure safe usage, and maintain transparency in the generative audio field. We want these technologies to be accessible to all while prioritizing security. The AI Speech Classifier is just one of the ways we provide software to support our educational efforts, such as our guide on the safe and legal use of Voice Cloning.

At ElevenLabs, our goal is to develop safe tools that enable the creation of remarkable content. As an organization, we have the ability to establish and enforce safeguards that are often lacking in open-source models. With this launch, we also empower businesses and institutions to enhance their own safeguards using our research and technology.

Community Voice Library

The Voice Library is a vibrant community space where users can generate, share, and explore an infinite range of voices. Powered by our proprietary Voice Design tool, the Voice Library brings together a global collection of vocal styles for endless applications.

Browse and utilize synthetic voices shared by others to discover new possibilities for your own projects. Whether you’re creating an audiobook, designing a video game character, or adding depth to your content, the Voice Library offers limitless potential. If you find a voice you like, simply add it to your VoiceLab.

All voices in the Voice Library are purely artificial and come with a free commercial use license.

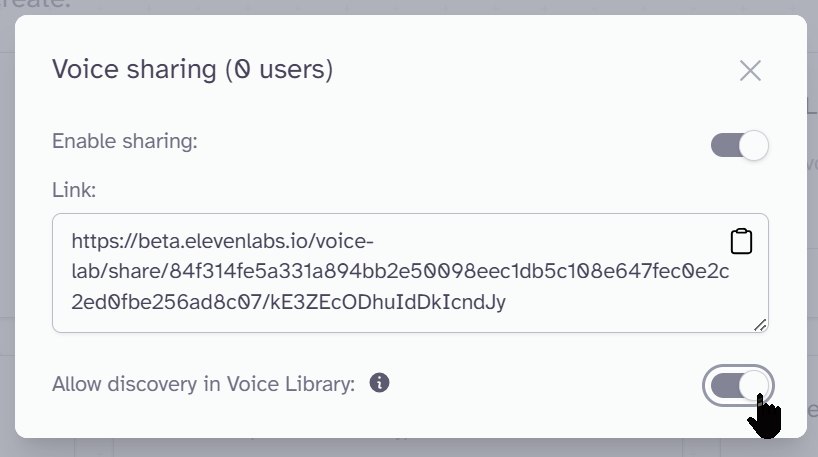

Your generated voices can now be shared and, with any level of paid account, added to the extensive Voice Library on the ElevenLabs site.

Sharing via Voice Library is a breeze:

- Go to VoiceLab

- Click the share icon on the voice panel

- Toggle enable sharing

- Toggle allow discovery in Voice Library

You can disable sharing at any time. If you do, your voice will no longer be visible in the Voice Library, but users who have already added it to their VoiceLab will retain access.

Here are just a few examples of the hundreds of voices available in the library: